Before you build, study failure

In Calgary, I was the head of design on a large enterprise project: upstream planning software for oil and gas. Fifty developers. Multiple product managers. A VP leading the effort.

It failed.

Not because of bad engineers or bad technology. It failed because we never studied the risks we might meet along the way. Neither the VP, the PMs, nor I looked at what problems similar projects had faced or what we should watch for.

Before your team starts the next big project, ask yourself: what are the risks we can predict? What killed projects that looked like ours?

I learned this the hard way. If nobody on your leadership team can answer that, you are doing what 99.5% of product teams do. You are guessing.

The most critical gap I see in product teams today is not whether they think about risk. It is when they think about it — and what they compare it to.

In today's newsletter:

Why the inside view is killing your project before the first sprint

What Hong Kong's $11 billion rail disaster and Netscape's rewrite have in common

Why your product and design team should own risk conversations

How to use Grok and Claude this week to study what killed projects like yours

Why Every Product Team Sees Only Half the Picture

Nobel Prize winner Daniel Kahneman identified two ways people make predictions.

The inside view is what every product team does naturally. You look at your team, your timeline, your tech stack. "We have strong engineers." "We have done this before." "This time is different."

The outside view asks one question: what happened to projects like ours?

In Calgary, the leadership team took the inside view. We looked at our architecture, our resources, our technology. Nobody looked outside. Nobody searched for "why do enterprise software rebuilds fail."

I have seen this pattern in every company I have worked in. The leadership team plans from hope, not from data.

According to Bent Flyvbjerg's database of more than 16,000 projects, only 0.5% met all three success targets: on time, on budget, delivering intended value.

0.5%.

A McKinsey and Oxford study of 5,400 IT projects found that the average large IT project runs 45% over budget and 7% over time, and delivers 56% less value than predicted.
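To make those averages concrete, here is a minimal sketch that applies them to a single plan. The plan figures ($10 million budget, 12 months, $20 million of expected value) are hypothetical, and applying averages to one project is a rough illustration, not a forecast.

```python
# Average outcomes for large IT projects, as quoted above.
BUDGET_OVERRUN = 0.45    # 45% over budget
SCHEDULE_OVERRUN = 0.07  # 7% over time
VALUE_SHORTFALL = 0.56   # 56% less value than predicted


def apply_averages(budget, months, value):
    """Return (cost, duration, value) after applying the average outcomes."""
    return (
        budget * (1 + BUDGET_OVERRUN),
        months * (1 + SCHEDULE_OVERRUN),
        value * (1 - VALUE_SHORTFALL),
    )


# Hypothetical plan: $10M budget, 12 months, $20M of expected value.
cost, duration, delivered = apply_averages(10_000_000, 12, 20_000_000)
print(f"Likely cost:     ${cost:,.0f}")
print(f"Likely duration: {duration:.1f} months")
print(f"Likely value:    ${delivered:,.0f}")
```

Run it with your own plan numbers. The gap between what you planned and what the averages suggest is the conversation your leadership team needs to have.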

Your Product Team Sees the Risk First

You might think risk forecasting is the engineering lead's job. Or the PM's job.

In my experience, the product and design team is better positioned to lead this.

When I create the information architecture — every screen, every component, every user flow — I am the first person who sees the real scope. The full complexity. The dependencies.

That is the moment to ask: what killed projects that looked like ours?

In Calgary, I built the information architecture. I could see the scope. But I never asked the risk question. The product and design team owns the scope. And scope is where the biggest risk lives.

Information architecture for asset modeling

Reference Class Forecasting

Bent Flyvbjerg, who studies why big projects fail, calls the outside-view method Reference Class Forecasting. Three steps:

Identify a class of past, similar projects. Your full product rewrite? Look at what happened to Netscape.

Analyze what actually happened. Where was the most risk?

Compare your project to the reference class. Adjust your estimates from reality, not from hope.
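The three steps above can be sketched in a few lines of Python. This is a simplified illustration, not Flyvbjerg's full method: the overrun figures are invented placeholders for whatever your own research turns up, and the function names are hypothetical.

```python
from statistics import quantiles

# Steps 1 and 2: a hypothetical reference class. Each number is the cost
# overrun of a past, similar project as a fraction of its original budget
# (0.40 means 40% over). These values are made up for illustration.
reference_overruns = [0.10, 0.25, 0.40, 0.45, 0.60, 0.90, 1.50, 2.00]


def uplift_for_confidence(overruns, confidence):
    """Overrun that `confidence` percent of the reference class stayed under."""
    cut_points = quantiles(overruns, n=100, method="inclusive")
    return cut_points[confidence - 1]


def adjusted_budget(inside_view_budget, overruns, confidence=80):
    """Step 3: adjust the inside-view estimate using the reference class."""
    return inside_view_budget * (1 + uplift_for_confidence(overruns, confidence))


plan = 5_000_000  # hypothetical inside-view estimate
for level in (50, 80):
    budget = adjusted_budget(plan, reference_overruns, level)
    print(f"P{level} budget: ${budget:,.0f}")
```

The point of the percentile is the choice it forces. A P50 budget means you accept a coin flip on going over. A P80 budget means four out of five comparable projects would have fit inside it.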

Before AI, this research meant weeks of work or hiring a consultant. That is why nobody did it. Now you can do it in one afternoon.

How AI Makes It Practical

Grok for research

This is where you find your reference class. In my experience, Grok gives the strongest research results — it pulls from real sources with citations you can verify.

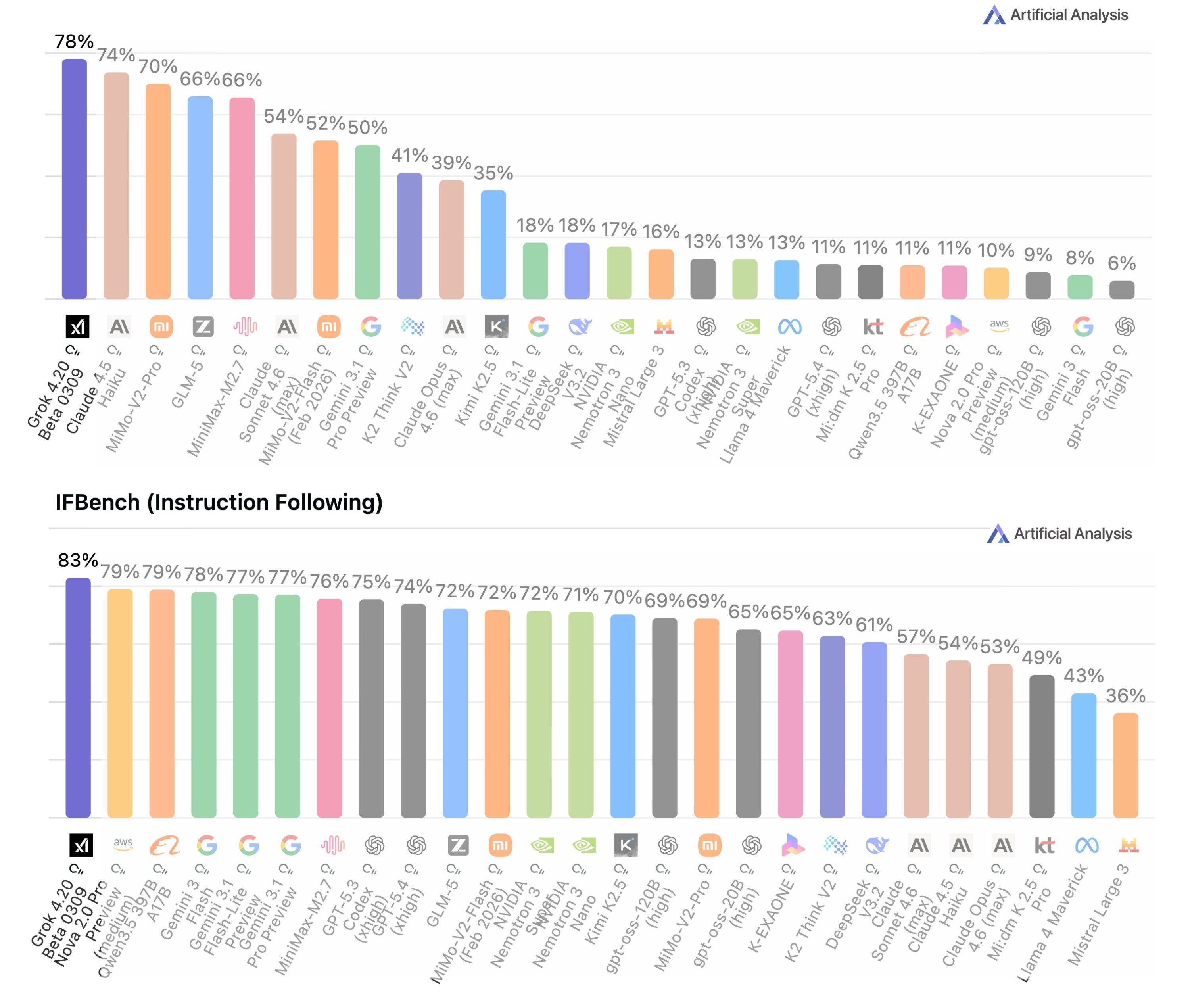

The first benchmark measures hallucination. The second measures instruction following.

The prompts are straightforward:

"What are the most common reasons enterprise software rebuilds over $5 million fail?"

"Show me case studies of SaaS companies that did full product rewrites. What happened?"

"What does the Standish Group CHAOS report say about IT project success rates?"

One afternoon. One tool. You now have the outside view.

Claude for synthesis and pre-mortem

Once you have the reference class data, bring it into Claude. Ask it to identify patterns: "Based on these case studies, what are the top risk factors for a project like ours?"

Then run a pre-mortem: "Here is our project scope, timeline, and team size. Based on what happened to similar projects, what are the most likely ways this fails?"

Now your pre-mortem is grounded in real data from real failures.

Not guesses.
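If you run pre-mortems often, a small template keeps the prompt consistent from project to project. The sketch below only assembles the text to paste into Claude. It calls no API, and the function name, field names, and sample values are all hypothetical.

```python
def build_premortem_prompt(scope, timeline, team_size, findings):
    """Assemble a pre-mortem prompt from project facts and research notes."""
    evidence = "\n".join(f"- {item}" for item in findings)
    return (
        "Here is our project scope, timeline, and team size.\n"
        f"Scope: {scope}\n"
        f"Timeline: {timeline}\n"
        f"Team size: {team_size}\n\n"
        "Here is what happened to similar projects:\n"
        f"{evidence}\n\n"
        "Based on what happened to similar projects, "
        "what are the most likely ways this fails?"
    )


# Hypothetical project facts; replace the findings with your own research.
prompt = build_premortem_prompt(
    scope="Full rewrite of an enterprise planning product",
    timeline="18 months",
    team_size=50,
    findings=["<paste finding 1 from your research>", "<paste finding 2>"],
)
print(prompt)
```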

Grok finds the reference class. Claude helps you apply it.

One afternoon, two days at most. No external research team needed.

Key Takeaway

Your project is probably not unique. Only 0.5% of large IT projects hit all three targets. The reference class does not lie.

Reference Class Forecasting: Identify similar past projects. Analyze what actually happened. Compare your project to the baseline.

Your product and design team sees the full scope first. That makes them the right people to raise the risk question.

AI makes the outside view practical. Two tools, Grok and Claude, can replace weeks of research.

AI Tools I'm Using This Week

🔍 Grok — For reference class research. The strongest tool for finding failure patterns and case study data with verifiable citations.

💬 Claude — CoWork feature for synthesizing research and running pre-mortems grounded in the outside view.

🎙️ Wispr Flow — This changed my life. I no longer write; I only speak. Speaking instead of writing is four times faster. You can start with the free tool as well.

That's all for this week. See you in the next one.

P.S. If your team is about to kick off something big, reply to this email. I would love to work with you on your risk strategy before the first sprint starts.